Did you know?

A quick search on Google shows that slowing page loads by just one second could cost Amazon $1.6 billion in sales each year, and that Google could lose 8 million searches per day if it slowed its search results by four tenths of a second.

Outages, unexpected system failures, downtime, and hardware or software failures are a nightmare for any IT organization. The failure of an application or system can result in data loss or in the temporary or permanent loss of a service. This can affect existing customers, interfere with marketing campaigns, cause lost revenue and damage the brand. We don’t want that, do we?

To keep failures from hurting the business, it is important to understand the different types of failures, what causes them and how they can be prevented.

To begin with, what are the common causes of failures?

- Hardware Failures

Machines or hosts may fail. A distributed application that depends on a single machine is especially at risk: if that one component fails, the entire setup can fail with it.
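A little arithmetic shows why every extra component the system cannot do without raises the risk. In the minimal sketch below, the setup is up only when all of its components are up, and component failures are assumed to be independent; `series_availability` and the 99% figure are hypothetical, chosen only for illustration.

```python
def series_availability(component_availabilities):
    """Probability that every component is up at the same time.

    Assumes independent failures: if any single component is down,
    the whole setup is down.
    """
    result = 1.0
    for a in component_availabilities:
        result *= a
    return result


# Hypothetical setup: five machines, each up 99% of the time.
print(f"{series_availability([0.99] * 5):.4f}")  # 0.9510
```

Five machines that are each up 99% of the time give a setup that is up only about 95% of the time, which is why removing single points of failure matters.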

- Software Failures

Bugs in software can affect the functioning of a component or the entire system as a whole.

For example, a new patch may introduce bugs that break existing functionality.

- Upgrades or Maintenance

Because technology moves at a fast pace, we need to keep our software and hardware up to date. This requires regular and frequent upgrades to existing systems, and such maintenance or upgrades can cause an outage and bring the system to a halt.

- Human Errors

Let’s accept it: all of us make mistakes. Errors or mishaps caused by people cannot be avoided entirely, and they too can cause downtime.

To make our systems highly fault-tolerant and available at all times, they need to be resilient to such failures. Adopting a few practices while designing the system architecture can help.

- Removing single points of failure

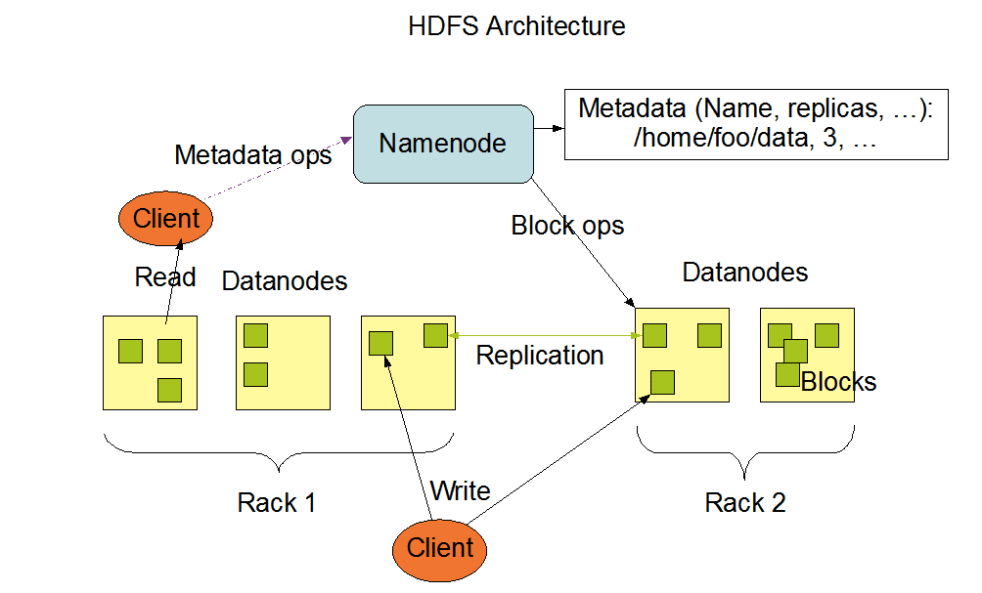

Removing single points of failure ensures that the system continues to run even if one of the machines fails. Hadoop is built to tolerate such failures: the failure of a slave node (DataNode) does not affect the application, and the system keeps running even though the affected node is not functional. However, failure of the master node (NameNode) may result in some downtime, although Hadoop has mechanisms to recover from it quickly, such as running a standby NameNode in a high-availability configuration.
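For the cluster to keep running around a failed node, it first has to notice the failure. HDFS DataNodes send periodic heartbeats to the NameNode for this purpose. The sketch below is a simplified, hypothetical model of heartbeat-based failure detection, not Hadoop’s implementation: `HeartbeatMonitor`, the node names and the 10-second timeout are all assumptions for illustration.

```python
import time


class HeartbeatMonitor:
    """Hypothetical heartbeat tracker for worker nodes.

    A node that has not reported within `timeout` seconds is treated as
    dead; work should then be routed only to the live nodes.
    """

    def __init__(self, timeout, clock=time.monotonic):
        self.timeout = timeout
        self.clock = clock      # injectable clock so the example is deterministic
        self.last_seen = {}     # node name -> time of its last heartbeat

    def heartbeat(self, node):
        self.last_seen[node] = self.clock()

    def live_nodes(self):
        now = self.clock()
        return sorted(n for n, t in self.last_seen.items() if now - t <= self.timeout)

    def dead_nodes(self):
        now = self.clock()
        return sorted(n for n, t in self.last_seen.items() if now - t > self.timeout)


# Simulated clock: node3 stops sending heartbeats after t = 0.
fake_now = [0.0]
monitor = HeartbeatMonitor(timeout=10.0, clock=lambda: fake_now[0])
for node in ("node1", "node2", "node3"):
    monitor.heartbeat(node)
fake_now[0] = 8.0
monitor.heartbeat("node1")
monitor.heartbeat("node2")
fake_now[0] = 15.0
print(monitor.live_nodes())  # ['node1', 'node2']
print(monitor.dead_nodes())  # ['node3']
```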

- Making the system robust to software bugs/errors

Hadoop is designed to tolerate some software bugs; for example, a failed task can be retried instead of failing the whole job. With proper error handling in the code, applications can be made more robust as well.
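One common form of such error handling is retrying an operation that failed because of a transient error. The minimal sketch below retries with exponential backoff. It is only similar in spirit to how Hadoop re-attempts failed tasks, and `call_with_retries`, the default of four attempts and the choice to treat `OSError` as transient are assumptions.

```python
import time


def call_with_retries(task, max_attempts=4, base_delay=0.1,
                      retry_on=(OSError,), sleep=time.sleep):
    """Call `task()` and retry on transient errors with exponential backoff.

    Waits base_delay, 2*base_delay, 4*base_delay, ... between attempts and
    re-raises the last error once `max_attempts` attempts have failed.
    Exceptions not listed in `retry_on` propagate immediately.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except retry_on:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))


calls = {"n": 0}


def flaky_task():
    """Hypothetical task that fails twice with a transient error, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise OSError("transient error")
    return "done"


# Pass a no-op sleep so the example runs instantly.
print(call_with_retries(flaky_task, sleep=lambda seconds: None))  # done
print(calls["n"])  # 3
```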

- Incorporate rolling upgrades

To keep software and hardware up to date, they need to be upgraded frequently, for example to pick up new features. A rolling upgrade updates the nodes a few at a time while the rest keep serving requests, so the system never has to be brought to a halt. Hadoop supports rolling upgrades.
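The idea behind a rolling upgrade can be sketched in a few lines. This is a hypothetical model, not Hadoop’s upgrade procedure: `rolling_upgrade`, the `upgrade` callback and the version strings are assumptions. It upgrades nodes in batches and records which nodes stayed in service during each batch, refusing any batch size that would take the whole cluster down at once.

```python
def rolling_upgrade(nodes, upgrade, batch_size=1):
    """Upgrade `nodes` in small batches so the others keep serving.

    `upgrade(node)` is a hypothetical callback that takes one node out of
    service, installs the new version and brings it back. Returns, for each
    batch, the nodes that stayed in service while that batch was upgraded.
    """
    if batch_size < 1 or batch_size >= len(nodes):
        raise ValueError("batch_size must leave at least one node in service")
    in_service_per_batch = []
    for start in range(0, len(nodes), batch_size):
        batch = nodes[start:start + batch_size]
        # Everything outside the current batch keeps handling requests.
        in_service_per_batch.append([n for n in nodes if n not in batch])
        for node in batch:
            upgrade(node)
    return in_service_per_batch


versions = {"node1": "v1", "node2": "v1", "node3": "v1"}


def upgrade(node):
    versions[node] = "v2"


print(rolling_upgrade(list(versions), upgrade))
# [['node2', 'node3'], ['node1', 'node3'], ['node1', 'node2']]
print(versions)  # {'node1': 'v2', 'node2': 'v2', 'node3': 'v2'}
```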

- Replication of data

One of the important features of Hadoop is that it replicates stored data across multiple hosts: by default, HDFS keeps three copies of each data block on different nodes. ‘Three’ is only the default; the Hadoop administrator can change it if required (the `dfs.replication` setting).
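Why three copies? If each host fails independently with probability p, a block is lost only when every host holding a copy fails, which happens with probability p raised to the replication factor. The sketch below shows that arithmetic plus a naive placement of copies on distinct hosts. Both functions are hypothetical; real HDFS placement is rack-aware rather than purely random, and real failures are not always independent.

```python
import random


def place_replicas(hosts, replication=3, rng=random):
    """Pick `replication` distinct hosts to hold copies of one block.

    Naive random placement for illustration only.
    """
    if replication > len(hosts):
        raise ValueError("not enough hosts for the requested replication factor")
    return rng.sample(hosts, replication)


def prob_all_copies_lost(p_host_failure, replication=3):
    """Chance that every copy is lost, assuming independent host failures."""
    return p_host_failure ** replication


hosts = ["host1", "host2", "host3", "host4", "host5"]
print(place_replicas(hosts, rng=random.Random(42)))  # three distinct hosts

for r in (1, 2, 3):
    print(r, f"{prob_all_copies_lost(0.01, r):.0e}")
# 1 1e-02
# 2 1e-04
# 3 1e-06
```

With a hypothetical 1% chance of losing any given host, going from one copy to three cuts the chance of losing the block from one in a hundred to one in a million.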

If one of the hosts that contains data fails, or if the data on that node is lost completely, the data can be recovered from a node that holds a replica. The best part about this feature is that Hadoop handles data replication internally, along with switching to a node where the data is replicated.
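Switching to a replica can be pictured as trying each host that holds a copy until one answers. In the minimal sketch below, `read_block` and the `fetch(host, block_id)` function are hypothetical stand-ins, not the HDFS client API; `fetch` is assumed to raise `ConnectionError` when a host is down.

```python
def read_block(block_id, replica_hosts, fetch):
    """Return the block from the first replica host that answers.

    `fetch(host, block_id)` is a hypothetical function that raises
    ConnectionError when the host is down or has lost the data.
    """
    errors = []
    for host in replica_hosts:
        try:
            return fetch(host, block_id)
        except ConnectionError as exc:
            errors.append(f"{host}: {exc}")  # remember why, then try the next copy
    raise OSError(f"all replicas of {block_id} unavailable: {errors}")


# Simulated cluster: host1 is down; host2 and host4 hold copies of blk_1.
storage = {"host2": {"blk_1": b"hello"}, "host4": {"blk_1": b"hello"}}


def fetch(host, block_id):
    if host not in storage:
        raise ConnectionError("host down")
    return storage[host][block_id]


print(read_block("blk_1", ["host1", "host2", "host4"], fetch))  # b'hello'
```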

- Reproducing the computation

In Hadoop, if a node performs slowly or does not respond in time, the system starts the same task on another working node (for slow tasks, this is known as speculative execution). This happens transparently, so the user is unaware of such failures. The result from whichever attempt finishes first is used, and the remaining attempts on slow or dead machines are then killed.
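The “first successful attempt wins” rule can be sketched with Python’s `concurrent.futures`. This is a simplification of speculative execution: Hadoop launches a backup attempt only for tasks that lag behind, whereas this hypothetical `run_speculatively` starts an attempt on every given worker up front. Python threads cannot be killed, so the slower attempts are cancelled if they have not started and otherwise simply ignored.

```python
import concurrent.futures as cf
import time


def run_speculatively(task, workers):
    """Run `task(worker)` on every worker and return the first success.

    Returns (worker, result) for the attempt that finished first without
    raising. Remaining attempts are cancelled if not yet started; running
    threads cannot be killed, so their results are discarded.
    """
    pool = cf.ThreadPoolExecutor(max_workers=len(workers))
    futures = {pool.submit(task, w): w for w in workers}
    try:
        for future in cf.as_completed(futures):
            if future.exception() is None:
                return futures[future], future.result()
        raise RuntimeError("every attempt failed")
    finally:
        for f in futures:
            f.cancel()
        pool.shutdown(wait=False)


# Hypothetical workers: one slow machine, one healthy machine.
delays = {"slow-node": 0.5, "fast-node": 0.05}


def word_count(worker):
    time.sleep(delays[worker])  # simulate the worker's speed
    return len("to be or not to be".split())


print(run_speculatively(word_count, ["slow-node", "fast-node"]))  # ('fast-node', 6)
```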

- Supporting faster restarts

Faster restarts can reduce downtime, even if they cannot avoid it entirely.
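The effect of restart speed is easy to quantify with the standard steady-state availability formula, availability = MTBF / (MTBF + MTTR), where MTBF is the mean time between failures and MTTR is the mean time to repair (here, to restart). The numbers in the sketch below, a failure every 1,000 hours and restarts of 60 versus 5 minutes, are hypothetical.

```python
def availability(mtbf_hours, mttr_hours):
    """Fraction of time the system is up, given mean time between failures
    (MTBF) and mean time to repair or restart (MTTR)."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def downtime_minutes_per_year(mtbf_hours, mttr_hours):
    """Expected minutes of downtime per 365-day year."""
    return (1 - availability(mtbf_hours, mttr_hours)) * 365 * 24 * 60


# Same failure rate, different restart times.
print(f"{downtime_minutes_per_year(1000, 1.0):.0f}")     # 525 (1-hour restarts)
print(f"{downtime_minutes_per_year(1000, 5 / 60):.0f}")  # 44 (5-minute restarts)
```

The failures happen just as often in both cases, but cutting restart time from an hour to five minutes cuts yearly downtime by roughly a factor of twelve.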

Failures are unexpected and unpredictable, which is why they cannot be completely avoided despite technological advancements. The features listed above are just some of those that make Hadoop resilient to failures, and a number of efforts are under way to add improvements and further functionality to newer versions of Hadoop. Businesses should build and use systems that are highly available and fault-tolerant, and that recover from errors quickly so that failures cause little or no damage. Whatever happens, don’t let the boat sink!